Docker helps build and deploy an application’s container(s). Where the application’s architecture is fairly simple, Docker can address the basic needs of the application’s lifecycle management. Where the application is broken down into multiple microservices, each with its own lifecycle and operational needs, Kubernetes comes into play. Kubernetes is not used to create the application containers; it needs a container platform to run them, Docker being the most popular one. Kubernetes also integrates with a large toolset built for and around containers and uses it in its own operations.
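To illustrate this split of responsibilities, here is a minimal sketch of the Kubernetes side. It assumes a hypothetical image `example/web:1.0` that has already been built with Docker, and produces a Deployment object asking Kubernetes to keep three copies of it running. The object is built as a Python dict and printed as JSON, which `kubectl` accepts as well as YAML; the name `web`, the image tag and the replica count are placeholders, not values from this article.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a minimal apps/v1 Deployment for an image built elsewhere (e.g. with Docker)."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {
                    # Kubernetes only references the image; it does not build it.
                    "containers": [{"name": name, "image": image}]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical image name, assumed to have been built and pushed with Docker.
    print(json.dumps(deployment_manifest("web", "example/web:1.0", replicas=3), indent=2))
```

Saving the output to a file and passing it to `kubectl apply -f` would hand this desired state to a cluster, while producing `example/web:1.0` in the first place (for instance with `docker build`) remains Docker’s job.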

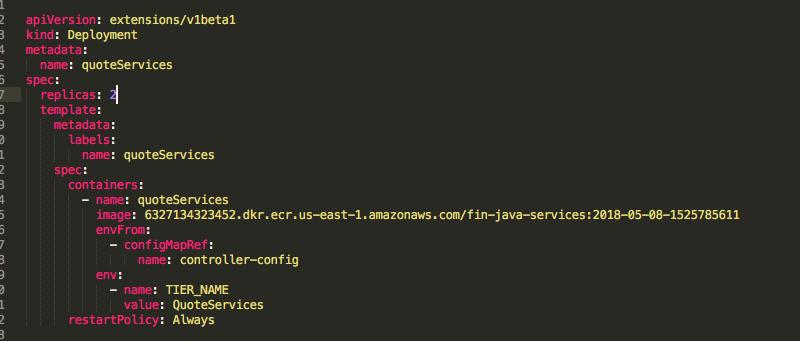

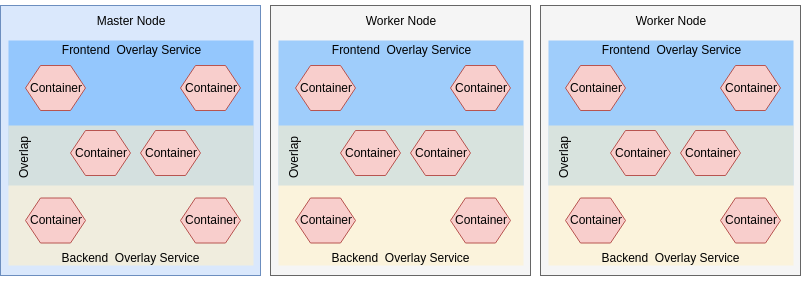

As container technology evolved, its popularity grew. Developers started to build and deploy containerised applications, breaking down monolithic apps into microservices for resource optimisation and easier maintenance. As a result, the industry saw a significant spike in the use of containers, and businesses faced new challenges in managing and deploying them.

Enter Kubernetes, an open-source project made available by Google in 2014. Kubernetes is an orchestrator of container platforms, such as Docker. It allows users to define the desired state of their container deployment on various substrates. Following user input, Kubernetes can deploy and manage multi-container applications across multiple hosts, taking action when needed to maintain the desired state. This level of automation has revolutionised the container space, as it created the framework for features such as scalability, monitoring and cross-platform deployments.

What is the relationship between Docker and Kubernetes?

Docker is mostly used during the early stages of a containerised application. So if ‘Docker is containers’ and ‘Kubernetes is container orchestration’, why would anyone ask "Should I use Docker or Kubernetes?" In reality, the two tools are complementary and together help build cloud-native or microservice architectures.
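Kubernetes’ desired-state model, where it takes action to keep a deployment matching what the user declared, can be sketched as a toy reconciliation loop. This is a simplified illustration, not Kubernetes’ actual controller code: the hypothetical `reconcile` function and `ClusterState` class compare a desired replica count per app with the containers observed running, and return the start/stop actions needed to converge.

```python
from dataclasses import dataclass


@dataclass
class ClusterState:
    """Hypothetical, simplified view of a cluster: running container IDs per app."""
    running: dict[str, list[str]]


def reconcile(desired: dict[str, int], observed: ClusterState) -> list[tuple[str, str]]:
    """Return (action, app) pairs that move the observed state towards the desired state."""
    actions: list[tuple[str, str]] = []
    for app, want in desired.items():
        have = len(observed.running.get(app, []))
        if have < want:
            # Too few copies: start the missing ones.
            actions.extend(("start", app) for _ in range(want - have))
        elif have > want:
            # Too many copies: stop the surplus.
            actions.extend(("stop", app) for _ in range(have - want))
    # Apps still running but no longer declared are stopped entirely.
    for app, ids in observed.running.items():
        if app not in desired:
            actions.extend(("stop", app) for _ in ids)
    return actions


if __name__ == "__main__":
    observed = ClusterState(running={"web": ["c1"], "db": ["c2"], "old": ["c3"]})
    print(reconcile({"web": 3, "db": 1}, observed))
    # → [('start', 'web'), ('start', 'web'), ('stop', 'old')]
```

A real orchestrator runs this comparison continuously, so a crashed container simply shows up as a gap between desired and observed state and gets replaced on the next pass.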

What is Docker?

Docker was launched in 2013 by Docker, Inc. as an open-source containerisation platform. It promised an easy way to build and deploy containers on the cloud or on-premises, and it is compatible with Linux and Windows. Docker did not introduce a new concept: containers rely on standard Linux kernel features such as cgroups, which Google engineers began developing in 2006. Still, its ‘new way to deploy software’ and ‘faster time-to-market’ messaging appealed to users so much that Docker soon became shorthand for containers and the default container format.

Docker streamlines the creation of containers with tools such as the Dockerfile and docker-compose. It also helps developers move workloads from their local environment through testing and into production by removing cross-environment inconsistencies and dependencies. This results in faster software delivery and higher quality.
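As an illustration of what docker-compose automates, here is a minimal sketch that prints a two-service Compose file. It assumes hypothetical services `web` (built from a Dockerfile in the current directory) and `db` (the public `postgres` image), with a placeholder password. Compose files are normally written by hand in YAML; since JSON is valid YAML, the printed output should also be readable by Compose, but treat that as an assumption to verify.

```python
import json


def compose_spec(web_port: int = 8000) -> dict:
    """Describe a hypothetical two-container app in Compose format."""
    return {
        "services": {
            "web": {
                # Built from a Dockerfile assumed to sit in the current directory.
                "build": ".",
                "ports": [f"{web_port}:{web_port}"],
                # Start the database container before the web container.
                "depends_on": ["db"],
            },
            "db": {
                "image": "postgres:16",
                # Placeholder credential for local development only.
                "environment": {"POSTGRES_PASSWORD": "example"},
            },
        }
    }


if __name__ == "__main__":
    print(json.dumps(compose_spec(), indent=2))
```

Redirecting the output to `compose.json` and running `docker compose -f compose.json up` would build `web` and start both containers on a single host; running such services across many hosts is where Kubernetes takes over.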

What are containers?

In short, containers package application software with its dependencies in order to abstract it from the infrastructure it runs on. A containerised application can be deployed easily and consistently on a local machine, a private data centre, a public cloud, or any other compute infrastructure. Containers are often compared to virtual machines (VMs), as they provide similar resource isolation and allocation capabilities. However, containers are more lightweight and portable than VMs, as they virtualise only the operating system rather than the hardware layer.